This blog post provides a brief introduction to generative diffusion modeling. In particular, the discussion focuses on the denoising diffusion probabilistic model (DDPM) [Sohl-Dickstein et al., 2015; Ho et al., 2020]. The relation to other generative modeling approaches such as energy-based models (EBMs), variational autoencoders (VAEs) or normalizing flows is emphasized in various review papers [Bond-Taylor et al., 2022; Luo, 2022]. Excellent explanations can also be found in the two blog posts [Lilian Weng, 2021; Angus Turner, 2021].

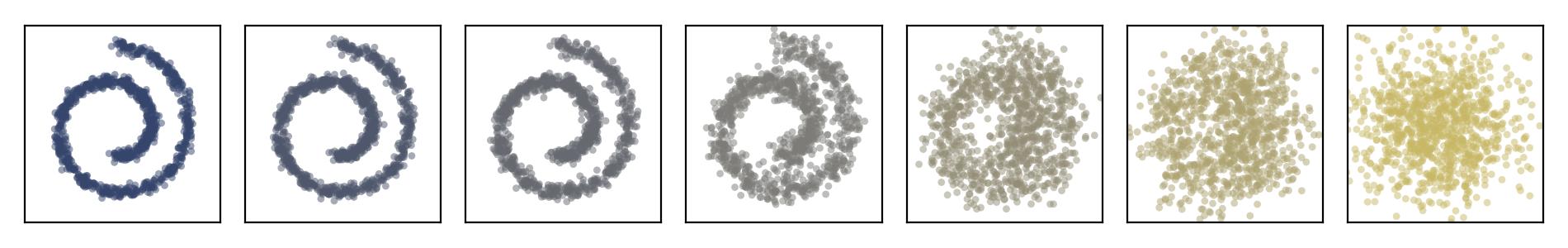

Noising process applied to a Swiss roll dataset:

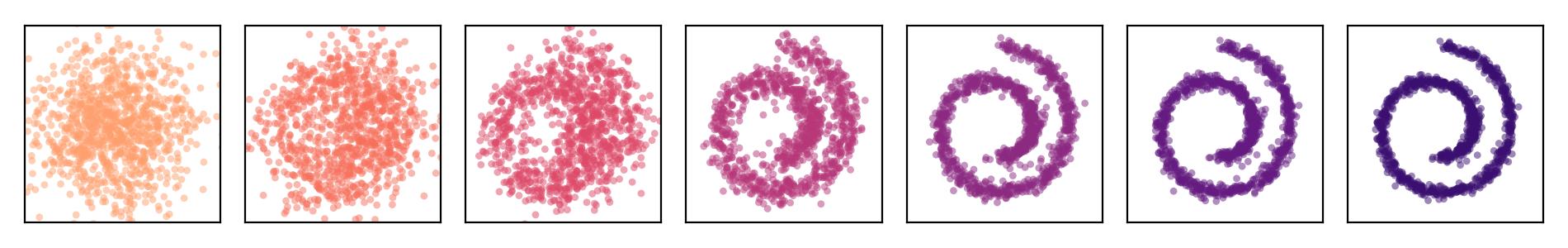

Corresponding trained generative process:

Noising process applied to a single MNIST digit:

Corresponding trained generative process:

Forward and reverse diffusion

A generative diffusion model usually consists of two processes. They transform between pure noise and data samples from the target distribution in a random fashion. The forward diffusion process gradually corrupts data by injecting noise. It is modeled as a Markov chain with transition kernel \(q(\boldsymbol{x}_t \vert \boldsymbol{x}_{t-1})\). Conditioned on a given sample \(\boldsymbol{x}_0\), the density can be written as

\[q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0) = \prod_{t=1}^T q(\boldsymbol{x}_t \vert \boldsymbol{x}_{t-1}).\]Including the unknown data distribution of \(\boldsymbol{X}_0 \sim q(\boldsymbol{x}_0)\), the joint density is \(q(\boldsymbol{x}_{0:T}) = q(\boldsymbol{x}_0) \prod_{t=1}^T q(\boldsymbol{x}_t \vert \boldsymbol{x}_{t-1})\).

Vice versa, the reverse diffusion process gradually denoises unstructured noise from a fixed prior distribution \(p(\boldsymbol{x}_T)\) into a data sample. It is a learnable Markov chain that evolves backwards in time. Its transition kernels \(p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t)\) are parametrized by trainable parameters \(\boldsymbol{\theta}\). The joint density of the reverse process is given as

\[p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0:T}) = p(\boldsymbol{x}_T) \prod_{t=1}^T p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t).\]Integrating this density over the latent variables yields the marginal of a data point \(p_{\boldsymbol{\theta}}(\boldsymbol{x}_0) = \int p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0:T}) \, \mathrm{d} \boldsymbol{x}_{1:T}\).

Training objective

Unfortunately, the latter density \(p_{\boldsymbol{\theta}}(\boldsymbol{x}_0)\) does not lend itself to maximum likelihood estimation for finding \(\hat{\boldsymbol{\theta}} = \mathrm{argmax}_{\boldsymbol{\theta}} \, \sum_{i=1}^N \log p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0,i})\) directly. The integral over the latent variables has no closed form and is therefore intractable. A naive Monte Carlo estimate, for example, would suffer from a high variance, which makes it very inefficient: most trajectories generated from the prior would assign a low likelihood value to the given data point.

One can, however, derive and optimize a variational bound of the likelihood [Sohl-Dickstein et al., 2015]. Following from Jensen’s inequality, for the marginalized data distribution one has

\[\begin{align*} \log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0) &= \log \int p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0:T}) \, \mathrm{d} \boldsymbol{x}_{1:T} = \log \int \frac{p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0:T}) q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)} {q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)} \, \mathrm{d} \boldsymbol{x}_{1:T} \\ &= \log \mathbb{E}_{q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)} \left[ \frac{p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0:T})} {q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)} \right] \geq \mathbb{E}_{q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)} \left[ \log \frac{p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0:T})} {q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)} \right]. \end{align*}\]Hence, such a bound \(L\) with \(\mathbb{E}_{q(\boldsymbol{x}_0)}[-\log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0)] \leq L\) is indeed established by

\[L = \mathbb{E}_{q(\boldsymbol{x}_{0:T})} \left[ - \log \left( \frac{p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0:T})} {q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)} \right) \right] = \mathbb{E}_{q(\boldsymbol{x}_{0:T})} \left[ - \log p(\boldsymbol{x}_T) - \sum_{t=1}^T \log \left( \frac{p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t)} {q(\boldsymbol{x}_t \vert \boldsymbol{x}_{t-1})} \right) \right].\]With this definition, the DDPM training task can be formulated as the optimization problem \(\hat{\boldsymbol{\theta}} = \mathrm{argmin}_{\boldsymbol{\theta}} \, L\). Instead of minimizing the intractable negative log-likelihood, an upper bound of it is minimized.

Exploiting the Markov property and Bayes’ theorem in the form of \(q(\boldsymbol{x}_t \vert \boldsymbol{x}_{t-1}) = q(\boldsymbol{x}_t \vert \boldsymbol{x}_{t-1}, \boldsymbol{x}_0) = \frac{q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t, \boldsymbol{x}_0) \, q(\boldsymbol{x}_t \vert \boldsymbol{x}_0)}{q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_0)}\), one can rewrite the variational bound in a more interpretable and better computable way:

\[L = \mathbb{E}_{q(\boldsymbol{x}_{0:T})} \Bigg[ \underbrace{D_{\mathrm{KL}}(q(\boldsymbol{x}_T \vert \boldsymbol{x}_0) \, \| \, p(\boldsymbol{x}_T))}_{L_T} + \sum_{t=2}^T \underbrace{D_{\mathrm{KL}}(q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t, \boldsymbol{x}_0) \, \| \, p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t))}_{L_{t-1}} \underbrace{-\log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0 \vert \boldsymbol{x}_1)}_{L_0} \Bigg].\]The KL divergence \(L_T\) quantifies how different the complete diffusion process \(q(\boldsymbol{x}_T \vert \boldsymbol{x}_0)\) is from the pure noise prior \(p(\boldsymbol{x}_T)\). As long as the forward process is not learnable, this term does not depend on \(\boldsymbol{\theta}\) and can thus be neglected. The terms \(L_1, \ldots, L_{T-1}\) penalize the difference between \(p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t)\) and the posterior of the diffusion process \(q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t, \boldsymbol{x}_0)\). They can be seen as a kind of consistency loss. The remaining \(L_0\) is a reconstruction-like loss.
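For completeness, the rewriting can be traced in two steps. The following is only a sketch of the standard argument: the \(t=1\) term is split off from the sum, and the Bayes identity is substituted for \(t \geq 2\).

\[\begin{align*} L &= \mathbb{E}_{q(\boldsymbol{x}_{0:T})} \left[ - \log p(\boldsymbol{x}_T) - \sum_{t=2}^T \log \left( \frac{p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t)} {q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t, \boldsymbol{x}_0)} \right) + \sum_{t=2}^T \log \left( \frac{q(\boldsymbol{x}_t \vert \boldsymbol{x}_0)} {q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_0)} \right) - \log \left( \frac{p_{\boldsymbol{\theta}}(\boldsymbol{x}_0 \vert \boldsymbol{x}_1)} {q(\boldsymbol{x}_1 \vert \boldsymbol{x}_0)} \right) \right] \\ &= \mathbb{E}_{q(\boldsymbol{x}_{0:T})} \left[ - \log \left( \frac{p(\boldsymbol{x}_T)} {q(\boldsymbol{x}_T \vert \boldsymbol{x}_0)} \right) - \sum_{t=2}^T \log \left( \frac{p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t)} {q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t, \boldsymbol{x}_0)} \right) - \log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0 \vert \boldsymbol{x}_1) \right]. \end{align*}\]

The second sum telescopes to \(\log q(\boldsymbol{x}_T \vert \boldsymbol{x}_0) - \log q(\boldsymbol{x}_1 \vert \boldsymbol{x}_0)\), and the contribution \(\log q(\boldsymbol{x}_1 \vert \boldsymbol{x}_0)\) cancels against the \(t=1\) term. Taking the expectation over the respective conditional distributions then turns the first two log-ratios into the KL divergences \(L_T\) and \(L_{t-1}\).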

Relation to VAEs

Note that \(L_0\) and \(L_T\) are loss terms that are also encountered for a VAE. It therefore seems instructive to investigate the connection to VAEs more closely at this point. Let us consider the Kullback-Leibler (KL) divergence between the conditional distribution \(q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)\) of the forward diffusion process and the posterior \(p_{\boldsymbol{\theta}}(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0) = p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0:T}) / p_{\boldsymbol{\theta}}(\boldsymbol{x}_0)\) of the reverse process:

\[\begin{align*} D_{\mathrm{KL}}(q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0) \, \| \, p_{\boldsymbol{\theta}}(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)) &= \int q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0) \log \left( \frac{q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)} {p_{\boldsymbol{\theta}}(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)} \right) \, \mathrm{d} \boldsymbol{x}_{1:T} = \int q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0) \log \left( \frac{\prod_{t=1}^T q(\boldsymbol{x}_t \vert \boldsymbol{x}_{t-1})} {p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0:T}) / p_{\boldsymbol{\theta}}(\boldsymbol{x}_0)} \right) \, \mathrm{d} \boldsymbol{x}_{1:T} \\ &= \int q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0) \left( \log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0) - \log p(\boldsymbol{x}_T) + \sum_{t=1}^T \log \left( \frac{q(\boldsymbol{x}_t \vert \boldsymbol{x}_{t-1})} {p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t)} \right) \right) \, \mathrm{d} \boldsymbol{x}_{1:T} \\ &= \log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0) - \mathbb{E}_{q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)} \left[ \log p(\boldsymbol{x}_T) + \sum_{t=1}^T \log \left( \frac{p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t)} {q(\boldsymbol{x}_t \vert \boldsymbol{x}_{t-1})} \right) \right]. \end{align*}\]By additionally averaging over the data distribution \(q(\boldsymbol{x}_0)\) one sees that \(L = \mathbb{E}_{q(\boldsymbol{x}_0)}[-\log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0)] + \mathbb{E}_{q(\boldsymbol{x}_0)}[ D_{\mathrm{KL}}(q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0) \, \| \, p_{\boldsymbol{\theta}}(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0))]\). The inequality from above then simply follows from \(D_{\mathrm{KL}}(q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0) \, \| \, p_{\boldsymbol{\theta}}(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0)) \geq 0\).
Moreover, one can now argue that minimizing \(L\) with respect to \(\boldsymbol{\theta}\) amounts to maximizing \(\mathbb{E}_{q(\boldsymbol{x}_0)}[\log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0)]\) and minimizing \(\mathbb{E}_{q(\boldsymbol{x}_0)}[D_{\mathrm{KL}}(q(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0) \, \| \, p_{\boldsymbol{\theta}}(\boldsymbol{x}_{1:T} \vert \boldsymbol{x}_0))]\) at the same time. This is completely analogous to the VAE.

Hence, a DDPM can be seen as a certain hierarchically defined VAE [Luo, 2022]. Both encoder and decoder have a Markovian structure. The encoder is predefined, instead of being learned from the data. It does not perform any dimension reduction and usually transforms to pure random noise. The DDPM latent space therefore does not play the same role as the latent VAE representation (mean or random sample of the learned posterior).

As an aside, the expected value \(\mathbb{E}_{q(\boldsymbol{x}_0)}[\log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0)]\) is normally used to show the equivalence of maximizing the log-likelihood and minimizing the KL divergence \(D_{\mathrm{KL}}(q(\boldsymbol{x}_0) \, \| \, p_{\boldsymbol{\theta}}(\boldsymbol{x}_0)) = -H[q(\boldsymbol{x}_0)] - \mathbb{E}_{q(\boldsymbol{x}_0)}[\log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0)]\), where \(H[q(\boldsymbol{x}_0)] = -\mathbb{E}_{q(\boldsymbol{x}_0)}[\log q(\boldsymbol{x}_0)]\) is the entropy of the data distribution and does not depend on \(\boldsymbol{\theta}\). Here, the approximation \(\mathbb{E}_{q(\boldsymbol{x}_0)}[\log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0)] \approx \frac{1}{N} \sum_{i=1}^N \log p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0,i})\) also clarifies the connection to the log-likelihood \(\sum_{i=1}^N \log p_{\boldsymbol{\theta}}(\boldsymbol{x}_{0,i})\).

Normal distributions

Both the forward and the reverse process can be constructed on the basis of Gaussian distributions. As usual, this greatly simplifies the analysis. As for the diffusion process, a natural choice for the Markov transition kernel is

\[q(\boldsymbol{x}_t \vert \boldsymbol{x}_{t-1}) = \mathcal{N} \left( \boldsymbol{x}_t \vert \sqrt{1-\beta_t} \boldsymbol{x}_{t-1}, \beta_t \boldsymbol{I} \right).\]Here, \(\beta_t \in (0,1)\) specifies the noise variance. It can be set to a constant or one can assume a certain variance schedule. For example, increasing variances with \(0 < \beta_1 < \ldots < \beta_T < 1\) are a common specification. More details can be found in the dedicated section below. Either way, the density \(q(\boldsymbol{x}_t \vert \boldsymbol{x}_0)\) for all \(t \in \{1,\ldots,T\}\) can be calculated as

\[q(\boldsymbol{x}_t \vert \boldsymbol{x}_0) = \mathcal{N} \left( \boldsymbol{x}_t \vert \sqrt{\bar{\alpha}_t} \boldsymbol{x}_0, (1-\bar{\alpha}_t) \boldsymbol{I} \right), \quad \bar{\alpha}_t = \prod_{s=1}^t \alpha_s, \quad \alpha_t = 1-\beta_t.\]For \(\sqrt{\bar{\alpha}_T} \to 0\) one can easily see that \(q(\boldsymbol{x}_T \vert \boldsymbol{x}_0) \to \mathcal{N}(\boldsymbol{x}_T \vert \boldsymbol{0}, \boldsymbol{I})\) approaches a Gaussian with zero mean and unit variance. It is noteworthy that this distribution does not depend on the initial state \(\boldsymbol{x}_0\) any longer.

Realizations of the Markov chain can be obtained by iteratively performing state updates. Here, the new state \(\boldsymbol{x}_t = \sqrt{1-\beta_t} \boldsymbol{x}_{t-1} + \sqrt{\beta_t} \boldsymbol{\epsilon}\) emerges from the previous one \(\boldsymbol{x}_{t-1}\) by adding noise \(\boldsymbol{\epsilon}\) that is sampled from a standard normal distribution \(\mathcal{N}(\boldsymbol{\epsilon} \vert \boldsymbol{0}, \boldsymbol{I})\). Similarly, one can simulate any state

\[\boldsymbol{x}_t = \sqrt{\bar{\alpha}_t} \boldsymbol{x}_0 + \sqrt{1-\bar{\alpha}_t} \boldsymbol{\epsilon}\]directly from the initial \(\boldsymbol{x}_0\) without having to perform all intermediate steps as above.
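The two ways of simulating the forward process can be compared in a few lines of NumPy. The following is a minimal sketch, not a reference implementation: the function names are made up for illustration, and the linear schedule with its default end points is merely an assumed example.

```python
import numpy as np

def make_linear_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    """Return beta_t, alpha_t and alpha_bar_t for t = 1..T (index t-1)."""
    betas = np.linspace(beta_start, beta_end, T)  # assumed example schedule
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    return betas, alphas, alpha_bars

def forward_iterative(x0, betas, t, rng):
    """Simulate x_t by applying t single-step transitions q(x_s | x_{s-1})."""
    x = x0.copy()
    for s in range(t):  # betas[s] holds beta_{s+1}
        eps = rng.standard_normal(x.shape)
        x = np.sqrt(1.0 - betas[s]) * x + np.sqrt(betas[s]) * eps
    return x

def forward_direct(x0, alpha_bars, t, rng):
    """Sample x_t in one shot from q(x_t | x_0); also return the noise used."""
    eps = rng.standard_normal(x0.shape)
    a_bar = alpha_bars[t - 1]  # alpha_bar_t, with t counted from 1
    x_t = np.sqrt(a_bar) * x0 + np.sqrt(1.0 - a_bar) * eps
    return x_t, eps
```

Both routines draw from the same distribution, so for a large batch of identical \(\boldsymbol{x}_0\) the empirical mean and variance of their outputs should agree up to sampling error. The direct variant is the one that matters for training, since it avoids the loop over intermediate steps.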

Another appealing consequence of the Gaussian design choice is that the conditional distributions \(q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t, \boldsymbol{x}_0)\), which occur in the loss function above, are Gaussian as well. While the derivation is not important here, the result is provided for the sake of completeness:

\[\begin{align*} q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t, \boldsymbol{x}_0) &= \mathcal{N} \left( \boldsymbol{x}_{t-1} \vert \tilde{\boldsymbol{\mu}}_t (\boldsymbol{x}_t, \boldsymbol{x}_0), \tilde{\beta}_t \boldsymbol{I} \right), \\ \tilde{\boldsymbol{\mu}}_t (\boldsymbol{x}_t, \boldsymbol{x}_0) &= \frac{\sqrt{\alpha_t} (1-\bar{\alpha}_{t-1})}{1-\bar{\alpha}_t} \boldsymbol{x}_t + \frac{\sqrt{\bar{\alpha}_{t-1}} \beta_t}{1-\bar{\alpha}_t} \boldsymbol{x}_0, \\ \tilde{\beta}_t &= \frac{1-\bar{\alpha}_{t-1}}{1-\bar{\alpha}_t} \beta_t. \end{align*}\]In view of the computation of \(L_{t-1} = \mathbb{E}_{q(\boldsymbol{x}_0, \boldsymbol{x}_t)}[D_{\mathrm{KL}}(q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t, \boldsymbol{x}_0) \, \| \, p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t))]\), it is beneficial to model the reverse process by Gaussian distributions, too. A standard normal prior \(p(\boldsymbol{x}_T) = \mathcal{N}(\boldsymbol{x}_T \vert \boldsymbol{0}, \boldsymbol{I})\) is usually considered together with learnable Gaussian transition densities

\[p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t) = \mathcal{N}(\boldsymbol{x}_{t-1} \vert \boldsymbol{\mu}_{\boldsymbol{\theta}}(\boldsymbol{x}_t, t), \sigma_t^2 \boldsymbol{I}).\]A neural network \(\boldsymbol{\mu}_{\boldsymbol{\theta}}(\boldsymbol{x}_t, t)\) parametrizes the mean value, whereas the standard choices for the variances are the fixed values \(\sigma_t^2 = \beta_t\) or \(\sigma_t^2 = \tilde{\beta}_t\). Of course, one could generalize this approach by assuming learnable variances or more complex covariance models \(\boldsymbol{\Sigma}_{\boldsymbol{\theta}}(\boldsymbol{x}_t, t)\) [Nichol and Dhariwal, 2021].
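The posterior parameters \(\tilde{\boldsymbol{\mu}}_t\) and \(\tilde{\beta}_t\) are easy to evaluate numerically. The sketch below is only an illustration: the function name is hypothetical, and the convention \(\bar{\alpha}_0 = 1\) (an empty product) is an assumption made here so that \(t = 1\) is handled as well.

```python
import numpy as np

def posterior_params(x_t, x_0, t, betas):
    """Mean and variance of q(x_{t-1} | x_t, x_0), with t counted from 1."""
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    beta_t, alpha_t = betas[t - 1], alphas[t - 1]
    a_bar_t = alpha_bars[t - 1]
    # Assumed convention: alpha_bar_0 = 1 (empty product).
    a_bar_prev = alpha_bars[t - 2] if t > 1 else 1.0
    coef_xt = np.sqrt(alpha_t) * (1.0 - a_bar_prev) / (1.0 - a_bar_t)
    coef_x0 = np.sqrt(a_bar_prev) * beta_t / (1.0 - a_bar_t)
    mean = coef_xt * x_t + coef_x0 * x_0
    var = (1.0 - a_bar_prev) / (1.0 - a_bar_t) * beta_t
    return mean, var
```

A quick plausibility check: for a noise-free state \(\boldsymbol{x}_t = \sqrt{\bar{\alpha}_t} \boldsymbol{x}_0\) the posterior mean reduces to \(\sqrt{\bar{\alpha}_{t-1}} \boldsymbol{x}_0\), and the posterior variance \(\tilde{\beta}_t\) never exceeds \(\beta_t\).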

Further simplifications

The KL divergence between two multivariate Gaussian distributions is analytically available. This can be readily exploited in the computation of the loss terms \(L_{t-1}\) for \(t = 2,\ldots,T\). For instance, with \(\sigma_t^2 = \tilde{\beta}_t\) one would have

\[L_{t-1} = \mathbb{E}_{q(\boldsymbol{x}_0, \boldsymbol{x}_t)}[ D_{\mathrm{KL}}(q(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t, \boldsymbol{x}_0) \, \| \, p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t))] = \mathbb{E}_{q(\boldsymbol{x}_0, \boldsymbol{x}_t)} \left[ \frac{1}{2 \sigma_t^2} \left\lVert \tilde{\boldsymbol{\mu}}_t(\boldsymbol{x}_t, \boldsymbol{x}_0) - \boldsymbol{\mu}_{\boldsymbol{\theta}}(\boldsymbol{x}_t, t) \right\rVert^2\right] + \text{const.},\]where terms that do not depend on \(\boldsymbol{\theta}\) have been omitted. By minimizing the mean squared error, the neural network \(\boldsymbol{\mu}_{\boldsymbol{\theta}}(\boldsymbol{x}_t, t)\) can be trained to predict the posterior mean \(\tilde{\boldsymbol{\mu}}_t (\boldsymbol{x}_t, \boldsymbol{x}_0)\) of the forward process.
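For reference, the closed-form expression behind this step can be written out for two isotropic Gaussians. Here, \(d\) denotes the dimension of \(\boldsymbol{x}\), a symbol that is introduced only for this aside:

\[D_{\mathrm{KL}} \left( \mathcal{N}(\boldsymbol{\mu}_1, \sigma_1^2 \boldsymbol{I}) \, \| \, \mathcal{N}(\boldsymbol{\mu}_2, \sigma_2^2 \boldsymbol{I}) \right) = \frac{1}{2 \sigma_2^2} \left\lVert \boldsymbol{\mu}_1 - \boldsymbol{\mu}_2 \right\rVert^2 + \frac{d}{2} \left( \frac{\sigma_1^2}{\sigma_2^2} - 1 - \log \frac{\sigma_1^2}{\sigma_2^2} \right).\]

With \(\boldsymbol{\mu}_1 = \tilde{\boldsymbol{\mu}}_t\), \(\sigma_1^2 = \tilde{\beta}_t\), \(\boldsymbol{\mu}_2 = \boldsymbol{\mu}_{\boldsymbol{\theta}}\) and \(\sigma_2^2 = \sigma_t^2\), only the first term depends on \(\boldsymbol{\theta}\). The second term vanishes for \(\sigma_t^2 = \tilde{\beta}_t\) and is a \(\boldsymbol{\theta}\)-independent constant for \(\sigma_t^2 = \beta_t\).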

A reparametrization of this model has been proposed in [Ho et al., 2020]. Since \(\boldsymbol{x}_t = \sqrt{\bar{\alpha}_t} \boldsymbol{x}_0 + \sqrt{1-\bar{\alpha}_t} \boldsymbol{\epsilon}\) can be used for computing realizations of the forward process, one can also write \(\boldsymbol{x}_0 = \frac{1}{\sqrt{\bar{\alpha}_t}} (\boldsymbol{x}_t - \sqrt{1-\bar{\alpha}_t} \boldsymbol{\epsilon})\). This can be directly plugged into the expression for \(\tilde{\boldsymbol{\mu}}_t (\boldsymbol{x}_t, \boldsymbol{x}_0)\) in order to obtain \(\tilde{\boldsymbol{\mu}}_t (\boldsymbol{x}_t, \boldsymbol{\epsilon}) = \frac{1}{\sqrt{\alpha_t}}(\boldsymbol{x}_t - \frac{\beta_t}{\sqrt{1-\bar{\alpha}_t}} \boldsymbol{\epsilon})\). Motivated by this form, one can parametrize the model as

\[\boldsymbol{\mu}_{\boldsymbol{\theta}}(\boldsymbol{x}_t, t) = \frac{1}{\sqrt{\alpha_t}} \left( \boldsymbol{x}_t - \frac{\beta_t}{\sqrt{1-\bar{\alpha}_t}} \boldsymbol{\epsilon}_{\boldsymbol{\theta}}(\boldsymbol{x}_t, t) \right).\]Instead of predicting the posterior mean, the newly introduced model \(\boldsymbol{\epsilon}_{\boldsymbol{\theta}}(\boldsymbol{x}_t, t)\) predicts the noise \(\boldsymbol{\epsilon}\) that is responsible for the transition from \(\boldsymbol{x}_0\) to \(\boldsymbol{x}_t = \sqrt{\bar{\alpha}_t} \boldsymbol{x}_0 + \sqrt{1-\bar{\alpha}_t} \boldsymbol{\epsilon}\). The loss term for its training is given as

\[L_{t-1} = \mathbb{E}_{q(\boldsymbol{x}_0), \mathcal{N}(\boldsymbol{\epsilon} \vert \boldsymbol{0}, \boldsymbol{I})} \left[ \frac{\beta_t^2}{2 \sigma_t^2 \alpha_t (1-\bar{\alpha}_t)} \left\lVert \boldsymbol{\epsilon} - \boldsymbol{\epsilon}_{\boldsymbol{\theta}} \left( \sqrt{\bar{\alpha}_t} \boldsymbol{x}_0 + \sqrt{1-\bar{\alpha}_t} \boldsymbol{\epsilon}, t \right) \right\rVert^2 \right].\]The remaining term \(L_0 = \mathbb{E}_{q(\boldsymbol{x}_0, \boldsymbol{x}_1)}[-\log p_{\boldsymbol{\theta}}(\boldsymbol{x}_0 \vert \boldsymbol{x}_1)]\) with \(p_{\boldsymbol{\theta}}(\boldsymbol{x}_0 \vert \boldsymbol{x}_1) = \mathcal{N}(\boldsymbol{x}_0 \vert \boldsymbol{\mu}_{\boldsymbol{\theta}}(\boldsymbol{x}_1, 1), \sigma_1^2 \boldsymbol{I})\) can, perhaps surprisingly, be brought into the same form if the normalization constant is discarded. An unweighted form of the training objective can then be written as

\[L_{\text{simple}} = \mathbb{E}_{\mathcal{U}(t \vert 1, T), q(\boldsymbol{x}_0), \mathcal{N}(\boldsymbol{\epsilon} \vert \boldsymbol{0}, \boldsymbol{I})} \left[ \left\lVert \boldsymbol{\epsilon} - \boldsymbol{\epsilon}_{\boldsymbol{\theta}} \left( \sqrt{\bar{\alpha}_t} \boldsymbol{x}_0 + \sqrt{1-\bar{\alpha}_t} \boldsymbol{\epsilon}, t \right) \right\rVert^2 \right].\]Here, \(t\) is drawn from the discrete uniform distribution \(\mathcal{U}(t \vert 1, T)\) over \(\{1,\ldots,T\}\). The parameters of the model \(\boldsymbol{\epsilon}_{\boldsymbol{\theta}}(\boldsymbol{x}_t, t) = \boldsymbol{\epsilon}_{\boldsymbol{\theta}}(\sqrt{\bar{\alpha}_t} \boldsymbol{x}_0 + \sqrt{1-\bar{\alpha}_t} \boldsymbol{\epsilon}, t)\) can finally be trained by minimizing this loss function. All in all, this is an unexpectedly simple training objective.
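To make the objective concrete, the following NumPy sketch estimates \(L_{\text{simple}}\) on a mini-batch and runs the corresponding ancestral sampling loop. It is an illustration under assumptions: `eps_model` stands for any callable `(x_t, t) -> eps_hat` (in practice a neural network trained with an autodiff framework), the function names are hypothetical, the sampler fixes \(\sigma_t^2 = \beta_t\), and adding no noise in the final step follows the sampling algorithm in [Ho et al., 2020].

```python
import numpy as np

def simple_loss(eps_model, x0_batch, alpha_bars, rng):
    """Monte Carlo estimate of L_simple on one mini-batch."""
    n = x0_batch.shape[0]
    T = len(alpha_bars)
    t = rng.integers(1, T + 1, size=n)         # t ~ U{1, ..., T}
    eps = rng.standard_normal(x0_batch.shape)  # eps ~ N(0, I)
    # Reshape alpha_bar_t so that it broadcasts over the data axes.
    a_bar = alpha_bars[t - 1].reshape(n, *([1] * (x0_batch.ndim - 1)))
    x_t = np.sqrt(a_bar) * x0_batch + np.sqrt(1.0 - a_bar) * eps
    residual = eps - eps_model(x_t, t)
    return np.mean(np.sum(residual.reshape(n, -1) ** 2, axis=1))

def sample(eps_model, shape, betas, rng):
    """Ancestral sampling with the fixed variance sigma_t^2 = beta_t."""
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    x = rng.standard_normal(shape)             # x_T ~ p(x_T) = N(0, I)
    for t in range(len(betas), 0, -1):
        i = t - 1                              # array index of step t
        eps_hat = eps_model(x, np.full(shape[0], t))
        mean = (x - betas[i] / np.sqrt(1.0 - alpha_bars[i]) * eps_hat) / np.sqrt(alphas[i])
        # No noise is added in the very last step (t = 1).
        noise = rng.standard_normal(shape) if t > 1 else np.zeros(shape)
        x = mean + np.sqrt(betas[i]) * noise
    return x
```

In a real training loop the loss would be differentiated with respect to the network parameters and minimized by stochastic gradient descent. The sketch only shows how the random time step, the noise and the noisy state enter the objective, and how the learned noise prediction is turned into the mean of \(p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1} \vert \boldsymbol{x}_t)\) during generation.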

Noise scheduling

An important practical issue is the noise schedule. It relates to the variances \(\beta_t \in (0,1)\) of the noise in the steps \(\boldsymbol{x}_t = \sqrt{1-\beta_t} \boldsymbol{x}_{t-1} + \sqrt{\beta_t} \boldsymbol{\epsilon}\) of the forward process for all \(t=1,\ldots,T\). While simple constant, linear or quadratic \(\beta_t\)-schedules were originally experimented with [Ho et al., 2020], more advanced schemes can of course be used.

One may, for example, assign a certain functional form to \(\bar{\alpha}_t\), rather than fixing the values of \(\beta_t\) directly. Such a choice controls the characteristics of the aggregated process steps \(\boldsymbol{x}_t = \sqrt{\bar{\alpha}_t} \boldsymbol{x}_0 + \sqrt{1-\bar{\alpha}_t} \boldsymbol{\epsilon}\) more directly. In [Nichol and Dhariwal, 2021] an \(\bar{\alpha}_t\)-schedule is proposed that uses a cosine-based form. A similar scheme with a sigmoid function is employed in [Jabri et al., 2023].

Different schedules can be compared by reference to the signal-to-noise ratio (SNR). It is simply given as \(\mathrm{SNR}(t) = \frac{\bar{\alpha}_t}{1 - \bar{\alpha}_t}\). The SNR measures the strength of the remaining signal in comparison to the level of the noise in each step of the forward process.
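As an illustration, the sketch below builds a linear \(\beta_t\)-schedule and a cosine-based \(\bar{\alpha}_t\)-schedule and evaluates their SNR. The function names are hypothetical. The default end points of the linear schedule and the cosine form with offset `s = 0.008` and clipping at `0.999` are the values commonly quoted from [Ho et al., 2020] and [Nichol and Dhariwal, 2021], respectively; they should be treated as assumptions and checked against those papers.

```python
import numpy as np

def linear_beta_schedule(T, beta_start=1e-4, beta_end=0.02):
    """Linearly increasing beta_t; returns (betas, alpha_bars)."""
    betas = np.linspace(beta_start, beta_end, T)
    return betas, np.cumprod(1.0 - betas)

def cosine_alpha_bar_schedule(T, s=0.008, max_beta=0.999):
    """Cosine-shaped alpha_bar_t; the betas are derived from it."""
    steps = np.arange(T + 1)
    f = np.cos((steps / T + s) / (1.0 + s) * np.pi / 2.0) ** 2
    alpha_bars = f / f[0]                           # alpha_bar_0 = 1
    betas = 1.0 - alpha_bars[1:] / alpha_bars[:-1]  # beta_t = 1 - alpha_bar_t / alpha_bar_{t-1}
    betas = np.clip(betas, 0.0, max_beta)           # avoid beta_T = 1
    # Recompute alpha_bar_t so that it is consistent with the clipped betas.
    return betas, np.cumprod(1.0 - betas)

def snr(alpha_bars):
    """Signal-to-noise ratio SNR(t) = alpha_bar_t / (1 - alpha_bar_t)."""
    return alpha_bars / (1.0 - alpha_bars)
```

Plotting `np.log(snr(alpha_bars))` over \(t\) for both schedules is a simple way to see how quickly each one destroys the signal.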

Conditioning

An early approach to conditional generation with probabilistic diffusion models is classifier guidance [Dhariwal and Nichol, 2021]. Here, the gradient of an auxiliary classifier is utilized in order to guide the generative sampling process. Classifier-free guidance is a related concept that is based on a certain implicit classifier [Ho and Salimans, 2022].

Beyond simple guiding techniques, one can construct diffusion models that condition properly on arbitrary inputs. A nice review of such conditional diffusion models is provided in [Zhan et al., 2024]. Important applications of conditioning are, for example, text-to-image synthesis [Rombach et al., 2021], image super-resolution [Saharia et al., 2023] and image-to-image translation [Saharia et al., 2022].

Conditional DDPMs try to learn a conditional data distribution \(q(\boldsymbol{x}_0 \vert \boldsymbol{c})\), rather than an unconditional distribution \(q(\boldsymbol{x}_0)\) as for standard DDPMs. Here, \(\boldsymbol{c}\) denotes some general conditioning input. A neural net \(\boldsymbol{\epsilon}_{\boldsymbol{\theta}}(\boldsymbol{x}_t, t, \boldsymbol{c})\), which is additionally dependent on \(\boldsymbol{c}\), can then be trained by minimizing

\[L_{\text{simple}} = \mathbb{E}_{\mathcal{U}(t \vert 1, T), q(\boldsymbol{x}_0 \vert \boldsymbol{c}), q(\boldsymbol{c}), \mathcal{N}(\boldsymbol{\epsilon} \vert \boldsymbol{0}, \boldsymbol{I})} \left[ \left\lVert \boldsymbol{\epsilon} - \boldsymbol{\epsilon}_{\boldsymbol{\theta}} \left( \sqrt{\bar{\alpha}_t} \boldsymbol{x}_0 + \sqrt{1-\bar{\alpha}_t} \boldsymbol{\epsilon}, t, \boldsymbol{c} \right) \right\rVert^2 \right].\]Practically, the \(\boldsymbol{c}\)-dependence can be realized by adding an appropriate embedding, in a channel-wise manner, between the convolutions of the U-Net (as is commonly done for class-conditioning). This is analogous to how the \(t\)-dependence is most often implemented. Alternatively, for conditions \(\boldsymbol{c}\) that are more complex but \(\boldsymbol{x}\)-like, one may also concatenate the conditioning and the state \(\boldsymbol{x}_t\) along the channel axis (as can be found in some conditional GANs).
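The two conditioning mechanisms can be illustrated at the level of array shapes. The sketch below is schematic only: the function names are hypothetical, a `(batch, channels, height, width)` layout is assumed, and the embedding is taken as given (in a real model it would be produced by a learned embedding layer and the result would be fed into the network).

```python
import numpy as np

def add_channel_embedding(features, embedding):
    """Add a per-sample, per-channel embedding to feature maps.

    features:  (batch, channels, height, width) intermediate feature maps
    embedding: (batch, channels) vector derived from t and/or c
    """
    # Broadcasting repeats the embedding over the two spatial axes.
    return features + embedding[:, :, None, None]

def concat_condition(x_t, c_image):
    """Stack an x-like condition onto the state along the channel axis."""
    return np.concatenate([x_t, c_image], axis=1)

# Usage: a batch of 2 states with 1 channel and a 3-channel condition image.
x_t = np.zeros((2, 1, 8, 8))
c_image = np.ones((2, 3, 8, 8))
net_input = concat_condition(x_t, c_image)  # shape (2, 4, 8, 8)
```

With concatenation, the first convolution of the network has to accept the enlarged number of input channels, whereas the additive embedding leaves all tensor shapes unchanged.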